We’re a couple of weeks into the development of our content audit tool, and are grappling with a number of challenges. These come in two forms:

- Technical challenges, such as how to crawl a website quickly without affecting the site performance, and how to reverse-engineer the structure of a website from on-page links.

- Interface challenges, such as how to navigate a long list of pages and how to best visually represent the structure.

The optimum solution to many of these issues depends on the size of the website: displaying the structure of a 20-page website requires a different approach from displaying that of a 1,000,000-page website.

What we needed to know, then, was the typical size of an audited website. Our personal experience of audits didn’t provide a large enough sample to extrapolate, so we took to the (virtual) streets to find an answer.

The Facebook ad. In retrospect, the word "amazing" wasn't a great choice. Two tips (this ad got a 400% better CTR than our other ad): put a question in the title, and have a face in the picture.

We have a pretty good idea of the type of website that is audited, from our experience, your overwhelming feedback, and the growing list of people who have registered their email addresses at the audit tool website. We’re also running a Google AdWords ad and a Facebook ad, to grow that list and get a better idea of the organizations that need the tool.

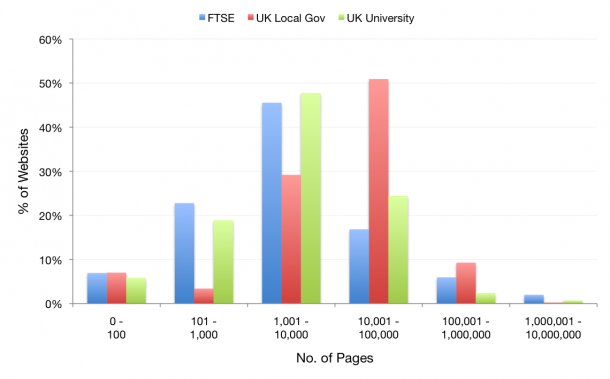

We decided to analyze three types of website: local government websites, university websites and enterprise websites. We used the Google Web Search API to return the approximate number of indexed pages (i.e. website size) for 100 FTSE company websites, 442 local UK government websites (e.g. city and district councils) and 291 UK university websites.

The data shows that enterprise and university websites typically have thousands of pages (1,000 to 10,000), and over 50% of local government websites have tens of thousands of pages (10,000 to 100,000). Few websites in our sample, fewer than 1%, had over a million pages.
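
For anyone wanting to repeat this kind of grouping on their own list of sites, here is a minimal sketch of bucketing page counts by order of magnitude. It is an illustration rather than the script we ran: the function names `magnitude_bucket` and `bucket_shares` are made up for this example, and the page counts in the usage lines are invented, not taken from our sample.

```python
from collections import Counter


def magnitude_bucket(page_count):
    """Return a label for the order-of-magnitude bucket a site falls into.

    Hypothetical helper: 20 lands in "10 to 99", 1,000 lands in
    "1,000 to 9,999", and so on.
    """
    if page_count < 1:
        return "0"
    # The number of digits gives the power of ten without floating-point error.
    exponent = len(str(page_count)) - 1
    low = 10 ** exponent
    high = 10 ** (exponent + 1) - 1
    return f"{low:,} to {high:,}"


def bucket_shares(page_counts):
    """Return the fraction of sites that fall into each bucket."""
    if not page_counts:
        return {}
    counts = Counter(magnitude_bucket(n) for n in page_counts)
    total = len(page_counts)
    return {bucket: count / total for bucket, count in counts.items()}


# Invented page counts, for illustration only.
example_counts = [20, 850, 3200, 7400, 15000, 64000, 1200000]
for bucket, share in bucket_shares(example_counts).items():
    print(f"{bucket}: {share:.0%}")
```

Running the same grouping separately for each type of website (enterprise, university, local government) gives the per-sector breakdown described above.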

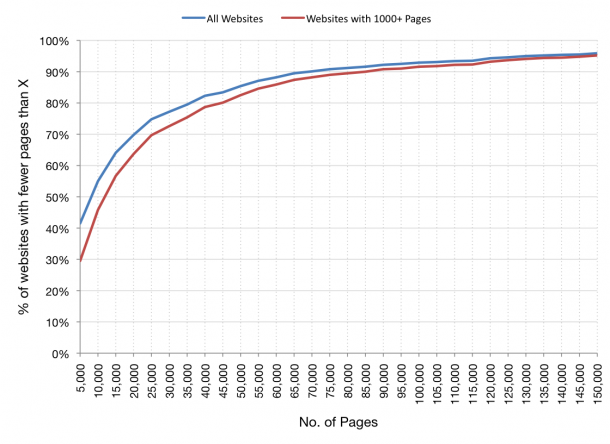

We created a second graph to find the percentiles, i.e. what percentage of websites have fewer than 10,000 pages? How many have fewer than 100,000 pages? We also plotted a second set of data that excludes all websites with fewer than 1,000 pages, as we don’t consider these smaller sites a valid target market for the app.

The data shows that 90% of the target (1,000+ page) websites in our sample have fewer than 90,000 pages. 50% have fewer than 12,500 pages.
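
The percentile figures can be reproduced with a few lines of code. The sketch below is one possible approach, not the exact method behind our graph: it assumes a simple nearest-rank percentile, the helper names (`target_market`, `share_below`, `percentile`) are hypothetical, and the sample numbers are invented for illustration.

```python
import math


def target_market(page_counts, min_pages=1000):
    """Keep only sites with at least min_pages pages (our assumed cut-off)."""
    return [n for n in page_counts if n >= min_pages]


def share_below(page_counts, threshold):
    """Fraction of sites that have fewer than `threshold` pages."""
    if not page_counts:
        raise ValueError("page_counts must not be empty")
    return sum(1 for n in page_counts if n < threshold) / len(page_counts)


def percentile(page_counts, pct):
    """Nearest-rank percentile: the smallest value with at least pct% of
    sites at or below it."""
    if not page_counts:
        raise ValueError("page_counts must not be empty")
    ordered = sorted(page_counts)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


# Invented page counts, for illustration only.
sample = [20, 500, 1200, 8000, 12000, 45000, 95000, 2000000]
target = target_market(sample)

print(f"Share of all sites under 10,000 pages: {share_below(sample, 10000):.0%}")
print(f"Median size of target sites: {percentile(target, 50):,} pages")
```

With real data, `percentile(target, 90)` would give the page count to design for if the goal is to cover 90% of the target market.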

This is all useful information for the challenges I mentioned at the start of the post. We now know that we don’t have to worry about crawling or visualizing websites with millions of pages: these are rare in our marketplace, and even though the tool will work with them, we shouldn’t optimize the interface for them. We do need the tool to expect tens of thousands of pages per site, although we can optimize for fewer than 90,000 pages (90% of our sample/market).

Data is crucial to our decision-making process, so that we can deliver the best possible experience to the greatest number of users. We’d love to hear your thoughts on this.